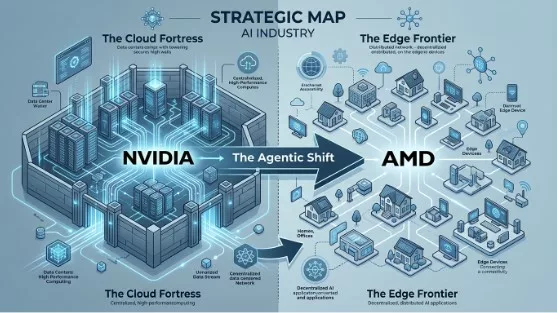

For the last three years, the technology industry has been held captive by a single narrative: the unstoppable rise of the AI data center. NVIDIA, through a combination of visionary leadership and a near-monopoly on high-end GPUs, has turned the data center into “the computer.” It has successfully convinced the world that AI is something that happens “out there,” in massive, power-hungry clusters that serve intelligence to us through a straw.

Last Friday, March 13, 2026, AMD formally challenged that consensus. With the announcement of its “Agent Computer” initiative, AMD isn’t just launching new chips; it is attempting a classic strategic flanking maneuver. While NVIDIA is focused on training foundation models, AMD is betting that the real value, and the real volume, lies in local inference for autonomous agents.

It is a bold bet that the PC is not just a terminal for the cloud, but a sovereign server for a personal digital workforce.

The Graveyard of Early Assistants

AMD’s vision isn’t entirely new; the industry has been chasing the “digital agent” for decades. However, the path is littered with the corpses of high-profile failures. Before we can understand why AMD might succeed, we must look at why others failed.

We often point to Microsoft’s Cortana as the primary example of an agent that over-promised and under-delivered, but it was hardly alone. Apple’s Siri and Amazon’s Alexa suffered from the same fundamental flaw: they were “command-and-control” interfaces, not autonomous agents. They were built on rigid intent-matching systems that couldn’t handle multi-step reasoning.

More recently, specialized AI hardware like the Humane AI Pin and the Rabbit r1 attempted to create dedicated agentic devices. They failed primarily because they were “thin clients” tethered to the cloud. They suffered from high latency, privacy concerns, and a lack of local compute power. When your “agent” has to ask a server three states away for permission to summarize an email, the illusion of agency shatters.

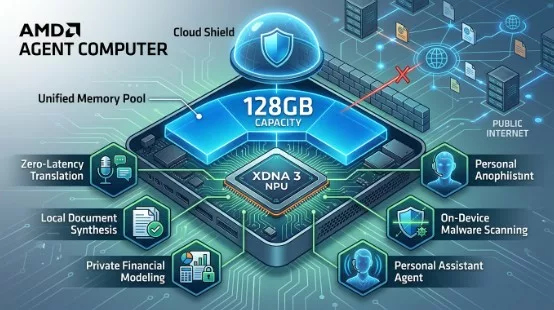

AMD is arguing that these efforts underperformed because they lacked the local “muscle” (the combination of high-bandwidth unified memory and dedicated NPU silicon) to run complex models without a cloud umbilical cord.

The Flanking Maneuver: Client Inference vs. Cloud Training

NVIDIA’s dominance is rooted in the H100 and B200 ecosystems. They own the training market. AMD, realizing that catching up in the data center is a multi-year slog, is instead shifting the battlefield to the client.

By launching the Ryzen AI Max+ series with support for 128GB of unified memory, AMD is providing the memory capacity needed to run 100B+ parameter models locally, at least in quantized form, since the 16-bit weights of a model that size would not fit in 128GB. This is a direct shot at NVIDIA’s “Inference in the Cloud” model.

If a professional can run a Qwen 3.5 (122B) model locally on an AMD workstation with no network round trip and with data that never leaves the machine, the economic and security argument for NVIDIA’s cloud-based inference begins to crumble. AMD is banking on the idea that corporations will prefer “Sovereign AI,” meaning agents that live within the corporate firewall, over sending sensitive data to a third-party data center.
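To see why 128GB is the number that matters here, it helps to run the back-of-the-envelope arithmetic. The sketch below estimates the memory needed for model weights alone as parameters times bits per parameter. The function names are illustrative, the 128 figure is treated as GiB for simplicity, and the estimate deliberately ignores the KV cache, activations, and whatever share of unified memory the operating system keeps for itself, so real headroom is smaller than it suggests.

```python
# Rough weight-memory estimate: parameters x bits per parameter.
# Assumption: weights only. KV cache, activations, and OS overhead are ignored.

UNIFIED_MEMORY_GIB = 128  # capacity cited in the article, treated here as GiB


def weight_gib(params_billion: float, bits_per_param: float) -> float:
    """Approximate memory for model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 2**30


def fits(params_billion: float, bits_per_param: float,
         budget_gib: float = UNIFIED_MEMORY_GIB) -> bool:
    """True if the weights alone are smaller than the memory budget."""
    return weight_gib(params_billion, bits_per_param) < budget_gib


if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_gib(122, bits)
        print(f"{bits:>2}-bit: {size:6.1f} GiB  fits={fits(122, bits)}")
```

For a 122B-parameter model this works out to roughly 227 GiB at 16-bit, about 114 GiB at 8-bit, and about 57 GiB at 4-bit. In other words, the pitch only holds with quantized weights, and an 8-bit copy leaves very little room for anything else.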

The Roadmap to Success: What AMD Must Do

Announcing a vision is easy; executing it in a market dominated by NVIDIA’s CUDA software moat is the hard part. For the Agent Computer to hit critical mass, AMD needs to execute on three fronts:

- Software Parity (ROCm): AMD must continue to aggressively fund and stabilize ROCm (originally short for Radeon Open Compute). Developers will only build for the Agent Computer if the software stack is as “invisible” and reliable as NVIDIA’s.

- Open Standards: AMD’s support for OpenClaw is a great start. By embracing open-source agentic frameworks, they allow a community of developers to bypass proprietary ecosystems.

- The OEM Push: AMD needs Lenovo, HP, and Dell to market these not as “faster PCs,” but as “Agent Workstations.” This requires a shift in how PCs are sold, focusing on outcomes (e.g., “This computer manages your supply chain”) rather than specs (e.g., “This computer runs at 5.0 GHz”).

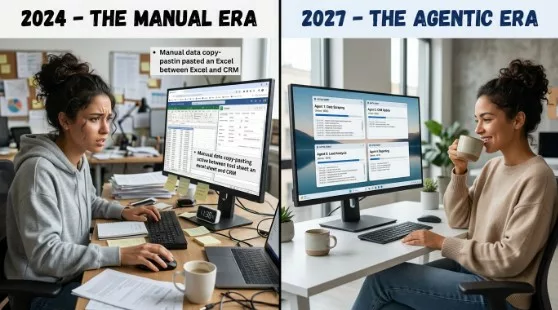

Life with Your Own Agent Computer

When this shift hits critical mass, likely around 2028, your relationship with technology changes from “operator” to “manager.”

Imagine starting your morning not by checking 50 unread emails, but by reviewing a three-paragraph summary from your Executive Agent. It has already cross-referenced your calendar, flagged a conflict in your 2:00 PM meeting, and drafted three potential responses to a client inquiry based on your past writing style.

This isn’t a chatbot you “talk” to; it’s a background process that has “agency.” You might tell it, “I need to plan the quarterly review,” and the computer—locally—spins up a Research Agent to pull data, a Design Agent to create the slides, and a Logistics Agent to invite the stakeholders. Your life changes from a series of manual micro-tasks to a series of high-level approvals.
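The shape of that workflow, one goal fanned out to several specialists and nothing final until a human signs off, can be sketched in a few lines. Everything here is hypothetical: the agent functions are stand-ins for locally running model calls, and none of the names come from AMD, OpenClaw, or any real framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Proposal:
    agent: str
    summary: str
    approved: bool = False


# Hypothetical sub-agents. In practice each would call a local model.
def research_agent(goal: str) -> Proposal:
    return Proposal("research", f"Collected data for: {goal}")


def design_agent(goal: str) -> Proposal:
    return Proposal("design", f"Drafted slides for: {goal}")


def logistics_agent(goal: str) -> Proposal:
    return Proposal("logistics", f"Prepared invitations for: {goal}")


AGENTS = [research_agent, design_agent, logistics_agent]


def plan(goal: str) -> list[Proposal]:
    """Fan the goal out to every sub-agent and collect their proposals."""
    return [agent(goal) for agent in AGENTS]


def review(proposals: list[Proposal],
           approve: Callable[[Proposal], bool]) -> list[Proposal]:
    """Mark each proposal using the human-supplied callback; return approved ones."""
    for proposal in proposals:
        proposal.approved = approve(proposal)
    return [p for p in proposals if p.approved]


if __name__ == "__main__":
    drafts = plan("quarterly review")
    # The manager approves everything except sending invitations.
    for p in review(drafts, lambda p: p.agent != "logistics"):
        print(f"[approved] {p.agent}: {p.summary}")
```

The point of the `review` step is that the human stays in the loop as an approver rather than an operator: the agents do the legwork, but nothing counts as approved until the callback says so.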

Wrapping Up

AMD’s “Agent Computer” initiative is more than a product launch; it is a declaration of independence from the cloud-centric AI model pioneered by NVIDIA. By focusing on local, massive-memory inference and autonomous agentic frameworks like OpenClaw, AMD is attempting to redefine the PC as a local intelligence server.

To succeed, AMD must solve its historical software hurdles and convince a skeptical enterprise market that “Sovereign AI” is worth the investment. If they do, they won’t just be competing with NVIDIA; they will be leading the charge into the most significant computing shift since the graphical user interface. The age of the computer as a tool is ending; the age of the computer as a colleague has begun.